I'm building Caffeine, a compiler written in Gleam that compiles service expectations to reliability artifacts. This post documents the migration from Deno to Bun and the tail call optimization rabbit hole that led there.

Built with Deno

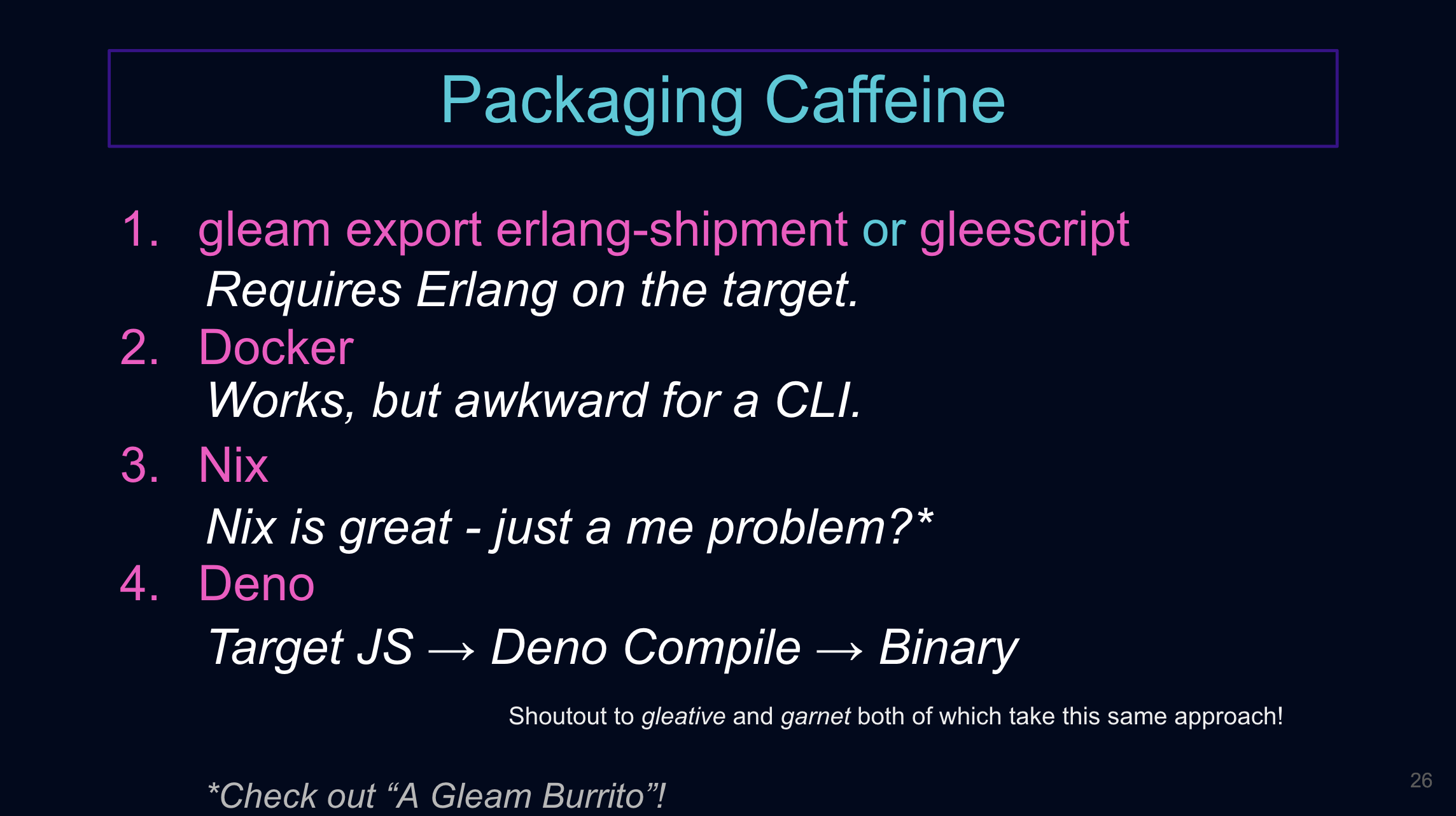

In my talk at Gleam Gathering, I have a slide where I discuss how I packaged up Caffeine:

Slide 26 from my Gleam Gathering talk, "Packaging Caffeine"

Most of the details can be found in this blog post if you're curious. TLDR is I couldn't quite get the nix setup to work so I ended up compiling to Javascript and then leveraging Deno to package it with a JS runtime. Some more details on this from their website.

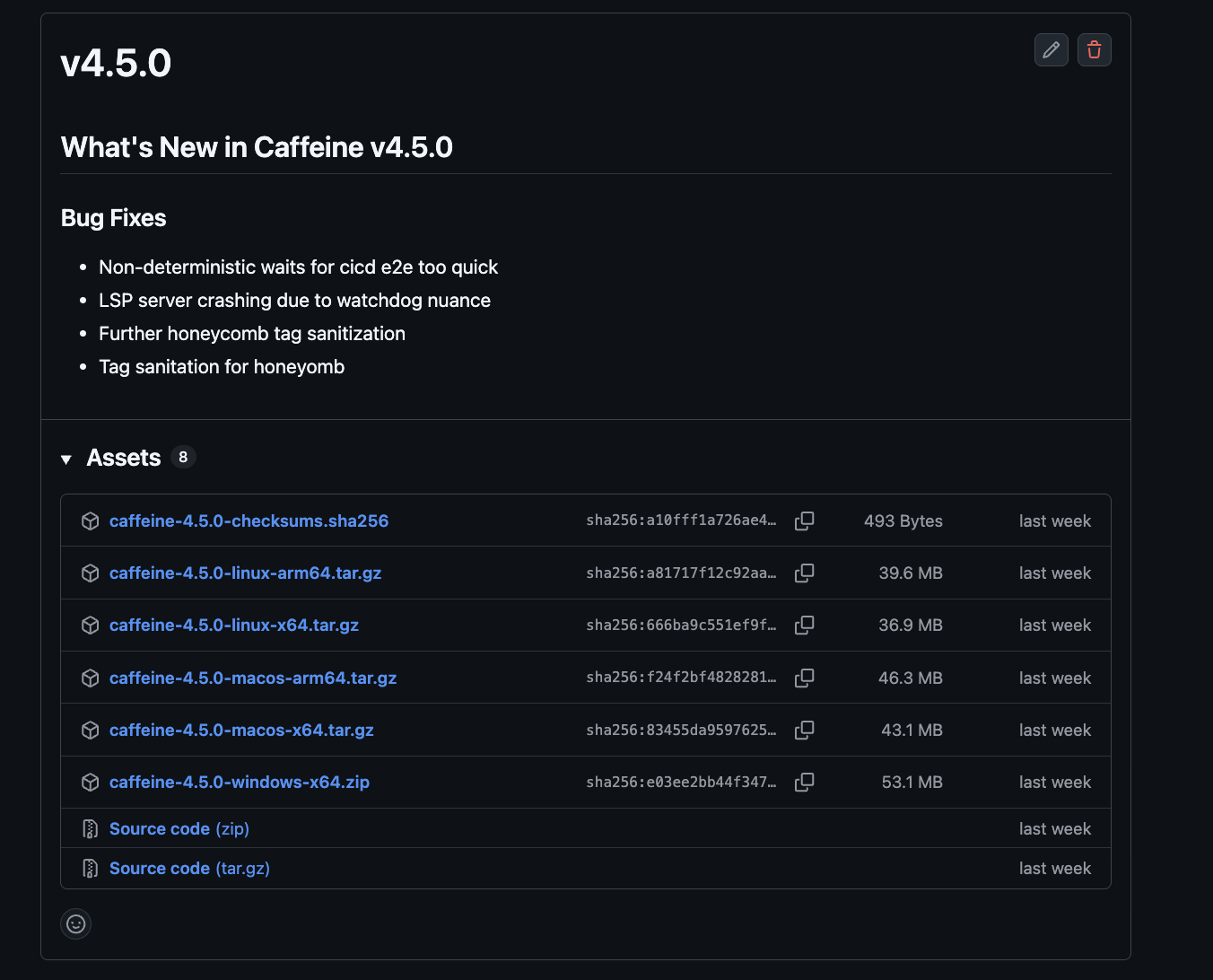

One of the downsides of this build setup is the size. Somewhat embarrassingly, I looked at the zipped/tarballed binaries and thought "eh 40mb not too bad right?":

Recent 4.5.0 release of Caffeine on Github

However, that's compressed. Here is the reality:

➜ brew info caffeine_lang

==> brickell-research/caffeine/caffeine_lang: stable 4.5.1

Caffeine programming language

https://caffeine-lang.run

Installed

/opt/homebrew/Cellar/caffeine_lang/4.5.1 (4 files, 121MB) *

Ooof 121mb. Not small. While relatively speaking that isn't the most offensively sized language toolchain, the actual

Caffeine compiler, CLI, and LSP together are only a small portion of the ~121mb. The rest is the Deno runtime

bundled along for the ride.

Well, for what it's worth this is a developer tool running on developer laptops

or within CICD pipelines. So the 121mb isn't a barrier to entry per se.

The more interesting question is speed and scale: within typical use cases, how fast will Caffeine compile and at what point does compilation become a noticeable bottleneck?

Setting Up Benchmarks

To begin answering these questions, I set up perf: various benchmarks and corpuses and such for caffeine.

The basic idea:

1. Generate a bunch of corpuses of Caffeine blueprints and expectations of varying sizes

2. Script the benchmarking of these corpuses using hyperfine

3. Setup CICD to continuously run these benchmarks in a controlled fashion

Ideally this process would be fairly deterministic. I wanted to control: (a) hardware (running within Github Actions), (b) corpus (consistent between runs), (c) warm-up (need to understand the JS runtime), and (d) build (should use actual Caffeine releases).

However, before going down the determinism rabbit hole, let's just get this setup first.

To bootstrap, I heavily leaned on Claude. I had it put together a corpus generator, wire up hyperfine, and build a script to compare against a baseline. For starters, I decided on the following corpus sizes:

┌────────────────┬────────────┬──────────────┐

│ Scale │ Blueprints │ Expectations │

├────────────────┼────────────┼──────────────┤

│ small │ 2 │ 4 │

├────────────────┼────────────┼──────────────┤

│ medium │ 5 │ 24 │

├────────────────┼────────────┼──────────────┤

│ large │ 20 │ 120 │

├────────────────┼────────────┼──────────────┤

│ huge │ 50 │ 600 │

├────────────────┼────────────┼──────────────┤

│ insane │ 50 │ 6,000 │

├────────────────┼────────────┼──────────────┤

│ absurd │ 50 │ 25,000 │

└────────────────┴────────────┴──────────────┘

Plus a set of single-blueprint scaling tests from 10 to 5,000 expectations to isolate how compilation time grows with expectation count.

Upon generation of these files, I wanted to format them: caffeine format .

➜ perf git:(main) ✗ caffeine format .

error: Uncaught (in promise) RangeError: Maximum call stack size exceeded

import * as $float from "../../../gleam_stdlib/gleam/float.mjs";

^

at tokenize_loop (file:///var/folders/.../tokenizer.mjs:1:1)

at emit_token_n (file:///var/folders/.../tokenizer.mjs:1:1)

at emit_token (file:///var/folders/.../tokenizer.mjs:1:1)

at tokenize_loop (file:///var/folders/.../tokenizer.mjs:1:1)

at emit_token_n (file:///var/folders/.../tokenizer.mjs:1:1)

at emit_token (file:///var/folders/.../tokenizer.mjs:1:1)

...

Not ideal (and this is before any actual benchmarking). The stack trace tells the whole story if you squint:

tokenize_loop → emit_token → emit_token_n → tokenize_loop → emit_token → .... An infinite-looking chain of mutual

recursion that ate through the entire call stack.

Tail Call Optimization

Ok, so what's actually going on here?

Within the functional programming paradigm, it's quite common for functions to call themselves recursively as the last step if some earlier base case isn't met. When a recursive call is the very last thing a function does (the "tail position"), a smart runtime can avoid pushing a new stack frame entirely, it just jumps back to the top of the function. This is called tail call optimization (TCO).

Consider a tail-recursive loop in Gleam:

fn sum_loop(list: List(Int), acc: Int) -> Int {

case list {

[] -> acc

[first, ..rest] -> sum_loop(rest, acc + first)

}

}

The recursive call to sum_loop is the very last thing that happens. There's no work left after it returns. That's what makes it a tail

call. Gleam compiles to both Erlang and Javascript. On Erlang's BEAM VM, tail calls are optimized natively as the BEAM was designed for this.

But on Javascript, it depends on the engine.

V8 does not currently ship proper tail calls. It previously had an implementation behind the --harmony-tailcalls flag around 2016 but disabled it and removed the flag due to developer tooling concerns.

A note on terminology: the ES6 spec mandates proper tail calls (PTC)

for strict-mode code, which is a language guarantee that tail-position calls will reuse the caller's stack frame. Since ECMAScript modules

are evaluated in strict mode, Gleam's ESM output is the right context for PTC. This is distinct from tail call optimization (TCO),

which is a discretionary compiler optimization. What Gleam's JS backend does (the while(true) loop) is TCO. What the spec requires is PTC.

The V8 team cited debugging concerns (tail-called frames disappear from stack traces) and chose developer experience over spec compliance. SpiderMonkey (Firefox) has never shipped PTC either (the tracking bug remains open).

Deno uses V8 and so Deno has neither PTC nor TCO for arbitrary tail calls.

What Gleam Does About It

Gleam's JS backend partially solves this with a compile-time loopification transform.

When it detects a function that directly tail-calls itself, it compiles it to a while(true) loop instead of

actual recursion, reassigning the arguments rather than making a new call. Constant stack space. Problem solved!

Except not always...

How It Breaks

The loopification only handles direct self tail-calls. Any recursion routed through another function (via a callback, thunk, or

intermediate call) won't be recognized by the transform. Gleam's use syntax makes this easy to hit. use is sugar for

passing a callback:

use #(item, state) <- result.try(parse_something(state))

parse_items_loop(state, [item, ..acc])

Desugars to:

result.try(parse_something(state), fn(#(item, state)) {

parse_items_loop(state, [item, ..acc])

})

Which compiles to something like this (simplified; Gleam's actual JS runtime uses different representations for Result and List, but the call structure is faithful):

function parse_items_loop(state, acc) {

return result_try(

parse_something(state),

(tuple) => {

let [item, state2] = tuple;

return parse_items_loop(state2, [item, ...acc]);

}

);

}

function result_try(result, callback) {

if (result.isOk()) {

return callback(result.value);

}

return result;

}

The recursive parse_items_loop call is in tail position of the closure, but not of parse_items_loop itself. From parse_items_loop's

perspective, the last thing it does is call result_try(...). And from result_try's perspective, the last thing it does is call

callback(...). Neither of those is a self-call, so neither gets optimized into a loop.

Every iteration adds multiple stack frames (parse_items_loop, result_try, and the callback closure) so recursion depth

grows by several frames per step. With 5,000 expectations, that's enough to exhaust V8's call stack.

Consider also Gleam's bool guards. Same pattern, different function. With use <- bool.guard(when: condition, return: Error(...)), the body after use becomes the

otherwise continuation, a closure. If your recursive call lives in that continuation, Gleam's JS loopification won't see it as a

direct self tail call. Same problem as result.try.

Mutual Recursion

This one is different. The tokenizer had a chain across three separate functions:

tokenize_loop → emit_token → emit_token_n → tokenize_loop

tokenize_loop matches a character, calls emit_token to record it, which calls emit_token_n to advance the position,

which calls back to tokenize_loop to process the next character. The final call is in tail position of emit_token_n,

and emit_token_n is in tail position of emit_token, and so on up the chain. But Gleam's JS backend only optimizes

self-tail-calls: function A calling function A. It can't see that this chain of three functions is effectively a loop.

This is a known open issue.

The Unwrapping

So I unwrapped everything. Replaced higher-order functions with direct case expressions so the recursive call lands in tail position of

the function itself.

For result.try:

// Before: closure hides the tail call

use #(item, state) <- result.try(parse_something(state))

parse_items_loop(state, [item, ..acc])

// After: direct case, recursive call in tail position

case parse_something(state) {

Ok(#(item, state)) -> parse_items_loop(state, [item, ..acc])

Error(e) -> Error(e)

}

The compiled JS goes from nested closures to something like (again, simplified):

function parse_items_loop(state, acc) {

while (true) {

const result = parse_something(state);

if (result.isOk()) {

let [item, state2] = result.value;

acc = [item, ...acc];

state = state2;

} else {

return result;

}

}

}

For bool.guard, same idea: replace the thunk with a plain case so the recursive call is direct.

For the mutual recursion in the tokenizer, I inlined emit_token and emit_token_n directly into tokenize_loop,

collapsing the three-function chain into a single self-recursive function. One function calling itself. Optimizable.

This is a real architectural tradeoff: one large function is harder to read and test than three focused ones. But on V8, it was that or crash.

The compiler stopped crashing. But I was fighting the engine and writing against the grain of the language.

Enter the Fine Folks Working on Safari

JavaScriptCore, the engine behind Safari and Bun, is the only major JS engine that implements proper tail calls per the ES6 spec, and has since 2016. They addressed the debugging concern with ShadowChicken, a shadow stack that lets Web Inspector reconstruct and display tail-deleted frames during debugging.

The key difference: V8 does not ship PTC, so tail-position calls still consume stack. JavaScriptCore implements PTC in

strict mode for all tail-position calls, not just self-calls but any call in tail position of any function. So that result_try → callback → parse_items_loop chain...

every call in that chain is in tail position of its caller. JavaScriptCore sees that and eliminates the frames. V8 just stacks them up

until it overflows.

Nothing against Deno here. But for a deeply recursive compiler written in a functional language, V8's lack of PTC was a dealbreaker. So I switched to Bun.

Bun Migration

The migration was small:

1. Remove npm: import prefixes from a handful of TypeScript files (the LSP server)

2. Swap Deno.args for process.argv.slice(2)

3. Change the build command

The compiled Gleam output is pure ESM that runs on any modern JS runtime (Node, Deno, Bun) without changes. I then undid all the manual unwrapping, restored result.try,

bool.guard, and the natural mutual recursion in the tokenizer. The code is cleaner and idiomatic Gleam again.

The Numbers

Here's v4.5.1 (Deno, with all the manual TCO workarounds) vs v4.6.0 (Bun, with natural recursive style restored).

A caveat: this isn't an apples-to-apples runtime comparison. The code changed too (v4.5.1 had manual TCO workarounds,

v4.6.0 restored natural recursive style). These numbers reflect the combined effect of switching runtimes and changing code.

To isolate the PTC effect you'd need to build the same commit for both runtimes, which I haven't done.

Complexity Benchmarks

┌──────────────────────────┬────────────────┬──────────────┬─────────┐

│ Corpus │ Deno (v4.5.1) │ Bun (v4.6.0) │ Ratio │

├──────────────────────────┼────────────────┼──────────────┼─────────┤

│ small (2 bp, 4 exp) │ 71.9 ms │ 60.7 ms │ 1.18x │

├──────────────────────────┼────────────────┼──────────────┼─────────┤

│ medium (5 bp, 24 exp) │ 149.2 ms │ 114.8 ms │ 1.30x │

├──────────────────────────┼────────────────┼──────────────┼─────────┤

│ large (20 bp, 120 exp) │ 625.5 ms │ 405.8 ms │ 1.54x │

├──────────────────────────┼────────────────┼──────────────┼─────────┤

│ huge (50 bp, 600 exp) │ 5.0 s │ 2.5 s │ 2.00x │

├──────────────────────────┼────────────────┼──────────────┼─────────┤

│ insane (50 bp, ~6K exp) │ 8.1 s │ 4.2 s │ 1.92x │

├──────────────────────────┼────────────────┼──────────────┼─────────┤

│ absurd (50 bp, ~25K exp) │ 26.3 s │ 13.5 s │ 1.95x │

└──────────────────────────┴────────────────┴──────────────┴─────────┘

Scaling Benchmarks (single blueprint)

┌──────────────┬────────────────┬──────────────┬─────────┐

│ Expectations │ Deno (v4.5.1) │ Bun (v4.6.0) │ Ratio │

├──────────────┼────────────────┼──────────────┼─────────┤

│ 10 │ 212.9 ms │ 146.5 ms │ 1.45x │

├──────────────┼────────────────┼──────────────┼─────────┤

│ 50 │ 354.7 ms │ 246.6 ms │ 1.44x │

├──────────────┼────────────────┼──────────────┼─────────┤

│ 100 │ 566.5 ms │ 376.1 ms │ 1.51x │

├──────────────┼────────────────┼──────────────┼─────────┤

│ 500 │ 2.1 s │ 1.4 s │ 1.55x │

├──────────────┼────────────────┼──────────────┼─────────┤

│ 1,000 │ 4.0 s │ 2.6 s │ 1.56x │

├──────────────┼────────────────┼──────────────┼─────────┤

│ 2,500 │ 10.6 s │ 6.6 s │ 1.60x │

├──────────────┼────────────────┼──────────────┼─────────┤

│ 5,000 │ 26.0 s │ 13.6 s │ 1.92x │

└──────────────┴────────────────┴──────────────┴─────────┘

Anywhere from 1.2x to 2x faster depending on corpus size. The ratio grows with corpus size, which is consistent with PTC benefiting deeper recursion. But again, this is a confounded benchmark, not a controlled experiment.

Binary Size

➜ brew info caffeine_lang

==> brickell-research/caffeine/caffeine_lang: stable 4.6.0

Caffeine programming language

https://caffeine-lang.run

Installed

/opt/homebrew/Cellar/caffeine_lang/4.6.0 (4 files, 62.1MB) *

Down from 121mb to 62mb. Nearly half. As a bonus Bun's runtime is also significantly smaller than Deno's.

Conclusion

If you're compiling a functional language to JavaScript and targeting V8, recursion patterns matter. Gleam's loopification handles direct self-calls, but anything routed through callbacks or mutual recursion will consume the stack on any engine that doesn't implement PTC. Right now, only JavaScriptCore does, so if you're using a tool to compile to a binary, Bun is the way.